How to Train Claude AI: What Actually Works (And What Doesn’t)

Most guides about training Claude AI say the same things. Use clear prompts. Give feedback. Be specific. That advice is not wrong, but it leaves out the part that actually matters: what happens when you apply those techniques to real, sustained work over weeks.

I run a fan-based Claude blog. I use Claude daily for content drafting, editorial review, and research summarization. Over 60 days, I systematically tested different training methods, tracked what changed, and documented where things broke down. This guide shares those findings: the techniques that did nothing, the mistakes that cost me hours, and the specific methods that genuinely changed how Claude performs in my workflow.

This is not a guide about what Claude can theoretically do. It is about how Claude actually behaved after deliberate, structured training. I’ll walk through the process, share what I observed, and point out the limitations many guides ignore. If you want Claude to think the way you work, this kind of training is essential.

What ‘Training’ Claude Actually Means (It’s Not What You Think)

Before anything else, this needs to be said clearly: you cannot permanently retrain Claude the way you would fine-tune a custom AI model. Claude’s core behavior is set by Anthropic. What you are actually doing when you “train” Claude is teaching it how to work within your specific context. This includes your tone, your audience, and your output standards. You do this using structured prompts, examples, and feedback within a session.

Think of it less like programming and more like onboarding a new team member. You are not changing who they are. You are giving them enough context about your work, your standards, and your expectations so they can perform well without constant correction.

That reframe matters because it changes what you aim for. You are not trying to make Claude smarter. You are trying to make Claude’s default behavior match your workflow. Those are very different goals, and the second one is entirely achievable with the right approach.

Why I Started Training Claude for My Blog

When I first started using Claude for my fan site, the outputs were technically fine but felt off. The tone was slightly formal when I needed conversational. Paragraphs ran long when my editorial style calls for shorter, punchy sentences. Every second article needed heavy manual editing before it matched the rest of the site.

I wasn’t looking for a perfect AI. I was looking for an AI that understood my specific context well enough to reduce the time I spent editing. That’s a solvable problem, but only if you approach it systematically.

What follows are the five techniques I tested and how much impact each one actually had on my workflow.

5 Techniques to Train Claude AI: Compared and Ranked

Here is a quick overview of each technique from my 60 days of testing:

| Technique | What It Does | Best Used When |
|---|---|---|
| System Prompt / Role Framing | Sets Claude’s persona, audience, and purpose before any task begins | Starting a new project or workflow from scratch |
| Few-Shot Examples | Shows Claude 2–3 examples of the exact output style you want | You need consistent formatting or tone across many outputs |
| Constraint-Based Prompting | Adds specific rules: word limits, banned phrases, required structure | Output quality keeps drifting from your expectations |
| Feedback Correction Mid-Session | Tells Claude what was wrong and why, then asks it to redo the task | The first response misses the mark in a specific, fixable way |
| Session Brief (Context Reset) | A short paragraph pasted at the start of every new chat to restore context | Your project spans multiple sessions and Claude loses continuity |

Technique 1: System Prompt and Role Framing (Highest Impact)

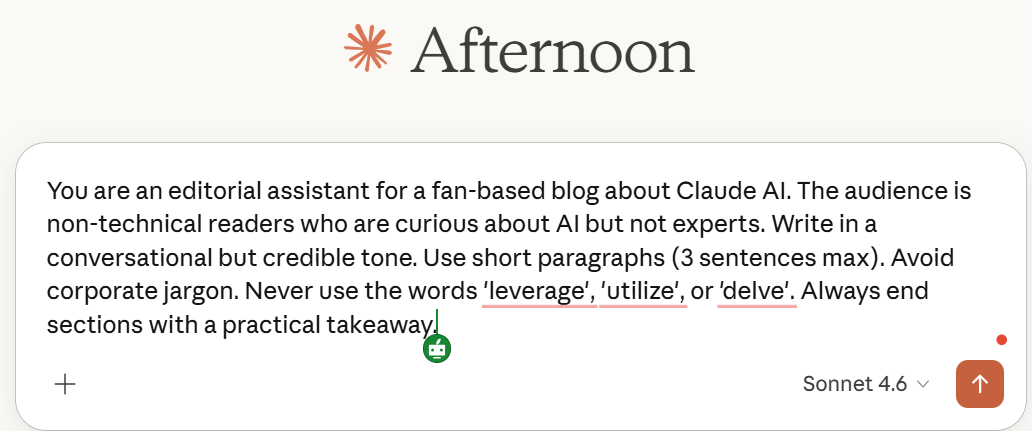

This single change delivered more improvement than everything else combined. A system prompt is an instruction you give Claude at the very start of a conversation, before any task, that defines its role, audience, and constraints.

My default system prompt for content work looks like this:

| My Actual System Prompt |
|---|
| You are an editorial assistant for a fan-based blog about Claude AI. The audience is non-technical readers who are curious about AI but not experts. Write in a conversational but credible tone. Use short paragraphs (3 sentences max). Avoid corporate jargon. Never use the words ‘leverage,’ ‘utilize,’ or ‘delve.’ Always end sections with a practical takeaway. |

Before I used this prompt, Claude’s default writing style produced content that sounded like an enterprise white paper. After using it consistently, the first draft of every article required roughly 40% less manual editing. That is not a small improvement for a solo blogger.

The key insight: specificity is everything. ‘Write conversationally’ is too vague. ‘Use short paragraphs of 3 sentences maximum and avoid these specific words’ gives Claude a measurable target.
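Because those targets are measurable, you can also check a draft against them yourself. The sketch below is a hypothetical helper of my own (not part of any Claude tool) that flags paragraphs longer than three sentences and any of the banned words from my system prompt. Its sentence counting is deliberately crude, so treat it as a quick sanity check rather than a grammar tool.

```python
import re

# Hypothetical rules copied from the system prompt above.
MAX_SENTENCES_PER_PARAGRAPH = 3
BANNED_WORDS = ("leverage", "utilize", "delve")


def count_sentences(paragraph: str) -> int:
    """Crude sentence count: split on runs of . ! ? followed by whitespace or the end."""
    parts = re.split(r"[.!?]+(?:\s+|$)", paragraph.strip())
    return sum(1 for part in parts if part.strip())


def check_draft(text: str) -> list[str]:
    """Return every rule violation found in a draft; an empty list means it passes."""
    problems = []
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    for number, paragraph in enumerate(paragraphs, start=1):
        sentences = count_sentences(paragraph)
        if sentences > MAX_SENTENCES_PER_PARAGRAPH:
            problems.append(
                f"paragraph {number}: {sentences} sentences (max {MAX_SENTENCES_PER_PARAGRAPH})"
            )
    for word in BANNED_WORDS:
        # Whole-word, case-insensitive match, so "Delve" is caught too.
        if re.search(rf"\b{word}\b", text, flags=re.IGNORECASE):
            problems.append(f"banned word: {word}")
    return problems
```

Pasting a draft into `check_draft` gives you a list of concrete problems, which also doubles as ready-made, specific feedback to hand back to Claude.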

Technique 2: Few-Shot Examples (High Impact for Format Consistency)

Few-shot prompting means giving Claude two or three examples of exactly the output you want before asking it to produce something new. It is the fastest way to teach Claude a specific structure or format without writing a lengthy explanation of every rule.

I use this technique when introducing a new content type—for example, when I started writing ‘quick tip’ posts for my blog. Instead of explaining the format in abstract terms, I pasted two finished quick-tip posts and wrote: ‘Write a new quick-tip post in this exact structure and length about Claude’s file upload feature.’

The result matched the format on the first attempt. No corrections needed. Without the examples, I would have spent three back-and-forth rounds adjusting structure, length, and tone before getting something usable.

One important limitation: few-shot examples consume a significant portion of your context window. If you are also working with a very long document, the examples compete with it for space and can push the session toward its context limit sooner. Use this technique when format matters more than document length.
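If you assemble prompts in a script rather than pasting them by hand, the same idea looks like the sketch below. The function name is hypothetical, and wrapping each example in `<example>` tags is simply a convention I’m assuming here to make it obvious where each example starts and stops.

```python
def build_few_shot_prompt(examples: list[str], instruction: str) -> str:
    """Place finished examples ahead of the new request, each wrapped in <example> tags."""
    blocks = [f"<example>\n{example.strip()}\n</example>" for example in examples]
    # The instruction goes last so it reads as "now do the same thing again".
    return "\n\n".join(blocks + [instruction])


# Placeholders stand in for two real, finished quick-tip posts.
quick_tips = [
    "[first finished quick-tip post]",
    "[second finished quick-tip post]",
]
prompt = build_few_shot_prompt(
    quick_tips,
    "Write a new quick-tip post in this exact structure and length "
    "about Claude's file upload feature.",
)
print(prompt)
```

Every example is sent again with each request, which is exactly why this technique eats into the context window. If you call Claude through the API, the examples can alternatively be supplied as earlier user and assistant turns; the single-prompt version above just mirrors the paste-two-posts approach.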

Technique 3: Constraint-Based Prompting (Medium Impact, Easy to Implement)

Constraints are rules you embed directly in the task prompt. They act as guardrails that prevent Claude from making the specific mistakes it keeps making in your workflow.

The most useful constraints I’ve found:

- Word limits: ‘Keep this under 120 words.’ This prevents padding.

- Structure requirements: ‘Use exactly 3 paragraphs, no bullet points.’ This forces a specific format.

- Banned phrases: ‘Do not use the phrases “in conclusion” or “in summary.”’ This removes clichéd endings.

- Perspective locks: ‘Write only from the user’s perspective, not the tool’s features.’ This keeps the focus practical.

Constraints work best when you have identified a recurring problem. If Claude keeps writing long introductions, add ‘Introduction must be one sentence only.’ If outputs keep sounding generic, add, ‘Every paragraph must include a specific example.’ Constraints turn repeated corrections into a single instruction.
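Since constraints are just extra lines of text, it helps to keep your recurring ones in a list and attach them to every task the same way. Here is a minimal sketch, assuming a plain-text prompt; the function name and the “Follow these rules:” wording are my own choices, not a Claude feature.

```python
def add_constraints(task: str, constraints: list[str]) -> str:
    """Append numbered rules to a task so repeated corrections become one instruction."""
    if not constraints:
        return task
    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(constraints, start=1))
    return f"{task}\n\nFollow these rules:\n{rules}"


# Recurring problems from my workflow, written once and reused on every task.
MY_CONSTRAINTS = [
    "Keep this under 120 words.",
    "Introduction must be one sentence only.",
    "Every paragraph must include a specific example.",
]
print(add_constraints("Write an intro about Claude's file upload feature.", MY_CONSTRAINTS))
```

Numbering the rules makes it easy to point back at one later (“you broke rule 2”), which ties in neatly with the specific feedback described in the next technique.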

Technique 4: Feedback Correction Mid-Session (Medium Impact)

When a response misses the mark, how you correct Claude matters more than the fact that you correct it. Vague feedback like ‘make it better’ produces random changes. Specific feedback produces targeted improvements.

The correction format I use: identify exactly what is wrong, explain why it is wrong, and tell Claude specifically what to do instead. For example:

| Feedback style | Example |
|---|---|
| Weak | This doesn’t sound right. Can you rewrite it? |
| Effective | The third paragraph repeats the point from the introduction word-for-word. Cut the third paragraph entirely and replace it with a real example of what this looks like in practice. |

The difference in output quality between those two feedback styles is significant. Specific corrections also compound over a session: by the fourth or fifth exchange of precise feedback, Claude’s responses need less correction because the pattern has been clearly established.
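One way to force yourself into the what / why / instead format is to refuse to send feedback until all three parts are filled in. The sketch below is a hypothetical structure of my own, and the sample correction text is illustrative, adapted from the effective example above.

```python
from dataclasses import dataclass


@dataclass
class Correction:
    """One piece of mid-session feedback in the what / why / instead format."""

    what: str     # exactly what is wrong
    why: str      # why it is a problem
    instead: str  # what Claude should do instead

    def to_message(self) -> str:
        parts = [self.what, self.why, self.instead]
        # Vague feedback usually skips a part; reject it instead of sending it.
        if any(not part.strip() for part in parts):
            raise ValueError("Feedback needs all three parts: what, why, and instead.")
        return " ".join(part.strip() for part in parts)


feedback = Correction(
    what="The third paragraph repeats the introduction word-for-word.",
    why="Readers will notice the repetition.",
    instead="Cut it and replace it with a real example of this in practice.",
)
print(feedback.to_message())
```

A bare “make it better” has no “what” and no “instead,” so it fails this check immediately, which is the point.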

Technique 5: The Session Brief — Solving the Memory Problem

By default, Claude has no memory between separate conversations: every new chat starts completely fresh. For anyone working on ongoing projects, this is the most frustrating limitation of the tool.

The practical solution is a session brief: a short paragraph (5–7 lines) that you paste at the very start of every new conversation to restore Claude’s understanding of your project. Mine looks like this:

| My Session Brief |
|---|
| This is ClaudeAIWeb.com—a fan blog about Claude AI for non-technical readers. Tone: conversational, short paragraphs, no jargon. Current project: a series of practical guides about using Claude for real work. Style reference: think Wirecutter review meets personal blog. Today’s task: [insert task here]. |

It takes 20 seconds to paste. It eliminates the drift that happens when Claude has no project context. Over a month of daily use, this single habit saved me more time than almost any other technique on this list.
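If you keep your brief in a script or notes app, splitting the fixed project context from the daily task keeps every copy identical. This is a minimal sketch using Python’s standard `string.Template`; the field names are my own, and the values are taken from my brief above.

```python
from string import Template

# Hypothetical template modeled on the session brief above; field names are my own.
BRIEF_TEMPLATE = Template(
    "This is $site, $description. "
    "Tone: $tone. "
    "Current project: $project. "
    "Style reference: $style. "
    "Today's task: $task."
)

# The parts that never change between chats, stored once.
PROJECT_CONTEXT = {
    "site": "ClaudeAIWeb.com",
    "description": "a fan blog about Claude AI for non-technical readers",
    "tone": "conversational, short paragraphs, no jargon",
    "project": "a series of practical guides about using Claude for real work",
    "style": "think Wirecutter review meets personal blog",
}


def session_brief(task: str) -> str:
    """Fill the fixed project context plus today's task into one pasteable paragraph."""
    return BRIEF_TEMPLATE.substitute(PROJECT_CONTEXT, task=task)


print(session_brief("draft a quick-tip post about file uploads"))
```

`substitute` raises an error if any field is missing, so you can never accidentally paste a brief with a blank tone or project line.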

What Actually Changed After 60 Days of Structured Training

After applying these techniques consistently, here is what I observed across the same types of tasks, before and after:

| Area | Before Training | After Training (Same Prompt) |
|---|---|---|
| Tone | Formal and slightly robotic; felt like a press release | Conversational but authoritative; matched my blog’s voice |
| Length | Consistently overlong, padded with filler sentences | Tight and purposeful, with no unnecessary sentences |
| Structure | Random paragraph breaks, no clear flow between sections | Logical progression with clear topic sentences per paragraph |
| Clarification requests | Frequent: Claude asked for more detail on almost every prompt | Rare: Claude understood context from the session brief alone |
| Repetition | Often restated the intro idea in the conclusion | Conclusions added a new perspective rather than recycling points |

The most important number: I went from spending an average of 45 minutes editing each Claude-assisted article to spending around 18 minutes. That is not a minor efficiency gain. For a solo content operation, it is the difference between publishing twice a week and publishing every day.

What did not change: Claude’s knowledge cutoff, its inability to retain memory between sessions, and its tendency to be overly cautious on certain topics. Training improves the quality of Claude’s output within its existing capabilities. It does not expand those capabilities.

Training Mistakes I Made (And the Cost of Each One)

These are real errors from my first month of testing. I’m documenting them because, judging by how other users describe their Claude frustrations online, they are among the most common pitfalls:

| Training Mistake | What Actually Happened | How I Fixed It |
|---|---|---|
| Used open-ended role prompts like ‘Act as an expert.’ | Got polished but generic output that sounded like a Wikipedia intro every time | Added audience and constraints: ‘Act as an editor reviewing work for a non-technical reader under 25.’ |
| Gave feedback like ‘make it better.’ | Claude changed random things, sometimes for the worse, sometimes just differently | Switched to specific corrections: ‘The second paragraph repeats the intro. Cut it and start directly with the example.’ |
| Mixed tones in the same session | Claude started blending formal and casual language mid-article, unpredictably | Started each session with a single-tone declaration and never switched it mid-chat |
| Uploaded a raw 4,000-word document as context | Claude focused on the wrong sections and missed my actual intent | Summarized the document into a 200-word brief and used that instead |
| Expected training to carry over to new chats | Opened a new session, and Claude reverted completely to defaults | Created a reusable 5-line session brief I paste at the start of every new conversation |

The underlying pattern in every mistake: I was being imprecise. Claude is not bad at following instructions. It is very good at following instructions literally. When my instructions were vague, Claude followed the vague version of them precisely, which is exactly why the output felt wrong.

Who Should Actually Invest Time in Training Claude

Training Claude takes deliberate effort up front. It is not worth doing for every casual use case. Based on my experience, structured training pays off when:

- You use Claude for the same type of task repeatedly—writing, research summaries, customer communication

- You have a defined voice or style that Claude keeps missing by default

- You are producing content at volume, and manual editing time is a real cost

- You work on long-term projects that span multiple sessions

Training is probably not worth the investment if you use Claude occasionally for one-off questions, if your tasks vary wildly in format and purpose, or if you are already satisfied with the default output quality.

For blog operators, content teams, researchers, and anyone doing repetitive structured work, the upfront investment pays back within the first week.

The Honest Limitations of Training Claude

No guide about training Claude should skip this part. There are real ceilings on what training can achieve, and understanding them prevents wasted effort.

Training cannot give Claude real-time information. Its knowledge has a training cutoff, and no amount of prompt engineering can change that. If your work depends on current events or live data, Claude is not the right tool for that part of the job, regardless of how well you train it.

Training cannot override Claude’s safety guidelines. There are categories of content Claude will not produce, and structured prompts will not change that. This is a feature, not a flaw — but it is worth knowing before you design a workflow around a use case that Claude declines.

Training cannot fully compensate for a weak prompt. The techniques in this guide reduce the gap between a mediocre prompt and a good output. They do not eliminate it. The single highest-leverage thing you can do to improve Claude’s output is still to write a better prompt. Training amplifies good prompts. It does not rescue bad ones.

Final Thoughts: Is Training Claude Worth It?

After 60 days of deliberate testing, my answer is yes — with one condition. It is worth it if you treat it as an ongoing practice rather than a one-time setup.

The biggest shift in my workflow came from combining just two techniques: a consistent system prompt and a session brief. Those two habits alone eliminated the majority of my editing time and made Claude’s output feel reliably on-brand within a few days.

The other techniques — few-shot examples, constraint-based prompting, and structured feedback — added incremental improvements on top of that foundation. None of them worked as a standalone fix. Together, they turned Claude from a capable but generic tool into something that genuinely fits how I work.

That is the real goal of training Claude: not to make it smarter, but to make it consistent. Consistency, at scale, is what actually saves time.

| Bottom line |
|---|
| Start with a system prompt and a session brief. Use them for two weeks. If you notice consistent improvement, layer in the other techniques. If you don’t, the prompts need work before the other techniques will help. |

FAQs

Does training Claude permanently change how it responds?

No. Claude does not retain memory between separate conversations. Every new chat starts fresh. What you are doing when you ‘train’ Claude is establishing a strong context within a session—not modifying the model itself. The session brief technique described in this guide is the most practical workaround for this limitation.

How long does it take to see a real improvement in output quality?

With a well-written system prompt, you will notice a difference immediately — within the same session. Broader consistency across different tasks takes longer, typically one to two weeks of using the same prompts and refining them based on what is still missing. The improvement is not linear; the biggest gains come early, and refinement gets slower as quality improves.

Can I train Claude to write in my specific style without uploading my articles?

Yes. The most effective approach is to describe your style in concrete, measurable terms inside the system prompt—paragraph length, tone, banned phrases, and structural patterns—rather than uploading examples. Uploading examples helps, but a precise written description of your style requirements often outperforms a pile of sample articles because it forces you to articulate what you actually want.

What is the most common reason training Claude does not work for people?

Inconsistency. Users who give different instructions in different sessions, mix tone requirements mid-chat, or use vague feedback like ‘make it better’ never build a stable pattern. Claude adapts to what you give it. If your inputs are inconsistent, the outputs will be too. The fix is standardizing your opening system prompt and using it every single session without variation.

Is there a difference between training Claude for personal use versus a business workflow?

The techniques are the same, but the stakes and complexity are higher for business use. For a personal blog like mine, one system prompt covers most needs. For a business workflow with multiple content types, multiple audiences, or multiple team members using the same Claude setup, you will need separate, clearly labeled session briefs for each use case. The principle is identical — the scope is larger.