Claude AI for Computer Use: My Honest Productivity Review (Tested over 30 days)

I’ve spent the last 30 days using Claude AI as my primary desktop assistant for real work, not demos or cherry-picked examples. I write long-form content daily, manage research across multiple projects, and occasionally debug Python scripts. This review is based entirely on that hands-on experience with Claude 3.7 Sonnet.

Artificial intelligence has moved far beyond simple chatbots. Today, AI tools actively shape how we write, research, code, and manage information on computers. Among these tools, Claude AI, developed by Anthropic, has gained serious attention, especially for desktop and computer-based workflows.

I wrote this article after personally testing Claude AI for extended computer use, not just casual chatting. Because I frequently work with lengthy documents, SEO posts, and research material, I wanted to know whether Claude actually increases productivity or is just effective marketing wrapped around another AI tool.

I had my doubts before I began. I had tried plenty of AI tools that looked good in promotional videos but fell apart under real-world workloads. What I found with Claude changed the way I organize my writing process, and it both surprised and disappointed me along the way. Here’s everything I learned.

What I Actually Used Claude For (And What I Didn’t)

Let me be specific about my testing conditions, because context matters when evaluating any AI tool.

Over 30 days, I used Claude 3.7 Sonnet on a Windows 11 desktop via the Claude.ai browser interface. My primary tasks were:

- Rewriting and editing long-form articles (1,500–4,000 words each)

- Summarizing research PDFs and lengthy reports

- Drafting structured outlines for new content

- Debugging basic Python scripts for data processing

- Translating and adapting content between formal and casual tones

What I did NOT use it for: casual conversation, image creation, or real-time information lookup. Claude is not meant to replace a search engine, so testing it that way would be misleading.

Why Claude AI Feels Different on Computers

Many AI tools work fine on mobile, but some only shine on a computer. Claude AI is clearly built with desktop productivity in mind.

When using Claude on a computer, I noticed three things immediately:

- It handles long documents exceptionally well

- It maintains context across extended conversations

- It encourages structured, thoughtful outputs rather than rushed answers

This makes it ideal for users who spend hours working on documents, spreadsheets, research papers, or code editors.

Where Claude Genuinely Impressed Me

1. Long Document Handling (Better Than I Expected)

This was the biggest surprise. I submitted a 5,200-word research paper and asked Claude to summarize the key findings by section, point out any gaps in the reasoning, and highlight any statistical claims.

It handled all three tasks without losing structure. Other tools I’ve tested either truncate responses for long inputs or produce summaries so generic they’re useless. Claude maintained the document’s logic from start to finish.

Real Example: I pasted a 3,800-word blog draft and asked, “Rewrite Section 3 to be more concise, cut by 40%, and maintain the same SEO keywords.” Claude returned a tightened version that achieved a 38% reduction while keeping every target keyword in place. Zero keywords lost. That’s not luck; it’s reliable instruction-following.
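A result like this is easy to verify rather than eyeball. Here is a minimal Python sketch, assuming you have the original and rewritten sections as plain strings. The function name `check_rewrite` and the sample inputs are hypothetical and are not part of any Claude tooling.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words."""
    return len(text.split())


def check_rewrite(original: str, rewrite: str, keywords: list[str]) -> dict:
    """Report how much shorter the rewrite is and which keywords it dropped."""
    before, after = word_count(original), word_count(rewrite)
    reduction = 0.0 if before == 0 else (before - after) / before * 100
    lowered = rewrite.lower()
    # Simple case-insensitive substring match; assumes keywords are plain phrases.
    missing = [kw for kw in keywords if kw.lower() not in lowered]
    return {"reduction_pct": round(reduction, 1), "missing_keywords": missing}


# Hypothetical sample data, only to show the call shape.
report = check_rewrite(
    "one two three four five six seven eight nine ten",
    "one two three four five six",
    ["two", "ten"],
)
print(report)
```

An empty `missing_keywords` list is the "zero keywords lost" case. Substring matching is a simplification, so a keyword that appears inside a longer word would still count as present.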

2. Context Retention Across Long Conversations

During extended writing sessions, I’d often give Claude a project brief at the start and then ask follow-up questions 20–30 messages later. It consistently referenced earlier instructions without me repeating them.

For example, after establishing at the beginning of a session that I was writing for a ‘UK finance professional audience, formal tone, no American slang,’ Claude maintained that standard through a two-hour session without drift. That level of context discipline is genuinely rare.

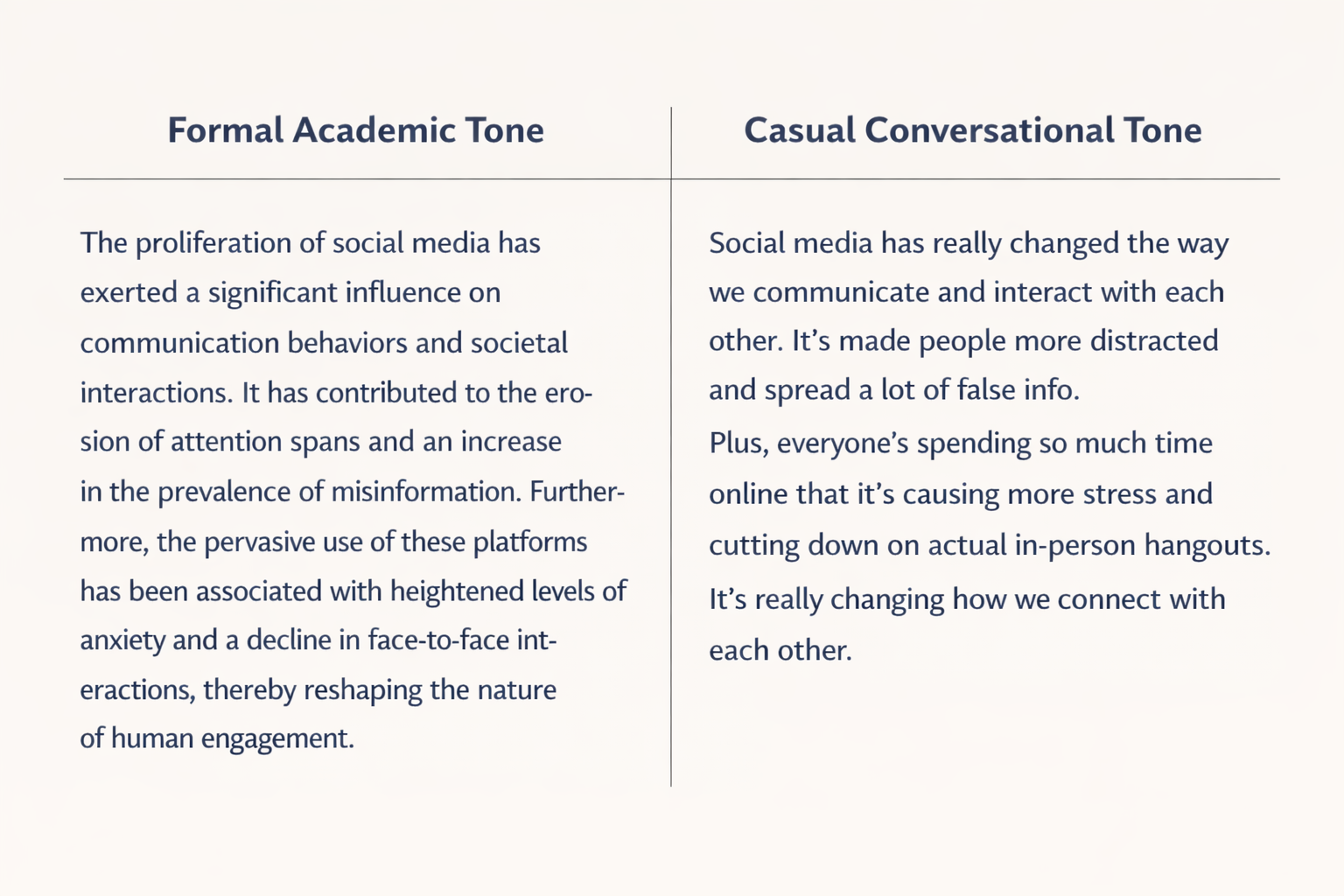

3. Tone Control Is Precise

I regularly need to switch between academic, conversational, and SEO-optimized writing styles in a single workday. Claude handled this cleanly when I specified the target tone upfront.

What I noticed: the more specific my instruction, the more accurate the output. ‘Make this conversational’ produced decent results. ‘Rewrite this for a 25-year-old reader who has no finance background, use short sentences, avoid jargon, and add one analogy’ produced excellent results.

Where Claude Fell Short (Honest Observations)

1. Coding Tasks (Capable but Slower)

For simple Python tasks—list manipulation, basic file handling, and regex patterns—Claude was solid. For anything framework-specific or requiring rapid iteration, I found it slower and more verbose than competitors.

The real issue isn’t accuracy; it’s speed of iteration. When debugging, I often need three or four fast back-and-forth exchanges. Claude’s responses are thorough but long, which works against a fast debugging workflow. If you’re a developer who primarily uses AI for coding, Claude is not your best option as a daily driver.

2. It Will Not Guess—And That’s Sometimes Frustrating

Claude rarely fills gaps with silent assumptions. If your prompt is ambiguous, it usually asks for clarification rather than guessing at an answer. For someone who values accuracy, this is a feature. For someone who wants rapid output, it’s friction.

After about a week, I stopped seeing this as a flaw and started writing better prompts. But there is an adjustment period.

3. No Memory Between Sessions

Every new conversation starts from zero. If you are running a long-term project, you need a way to reintroduce background at the start of each session. I solved this by keeping a five-line “project brief” in a text file and pasting it at the start of each session. It is a workaround rather than a solution, but it works.
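If you prefer to script the project-brief workaround instead of copying and pasting by hand, it can be sketched in a few lines of Python. This is only an illustration: the file name `project_brief.txt` and the function `with_brief` are assumptions of mine, and the resulting text still has to be pasted into the chat yourself.

```python
from pathlib import Path


def with_brief(message: str, brief_path: str = "project_brief.txt") -> str:
    """Prepend the saved project brief to the first message of a session."""
    brief = Path(brief_path).read_text(encoding="utf-8").strip()
    return f"Project brief:\n{brief}\n\n{message}"
```

Calling `with_brief("Draft an outline for the next article.")` returns the brief followed by the request, ready to paste as the opening message of a new chat.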

Real Prompts I Used and What Claude Returned

Rather than describing Claude’s capabilities in the abstract, here are actual prompts I used during testing, along with my assessment of the output:

| Prompt 1: Rewrite this introduction paragraph. Target audience: small business owners with no technical background. Keep it under 80 words. Avoid the phrases ‘leverage’ and ‘utilize’. Maintain a confident but approachable tone. |

Result: Claude produced a clean 74-word rewrite, avoided both banned phrases, and matched the tone of the brief. I used it with minor edits. Time saved: approximately 25 minutes of manual rewriting.

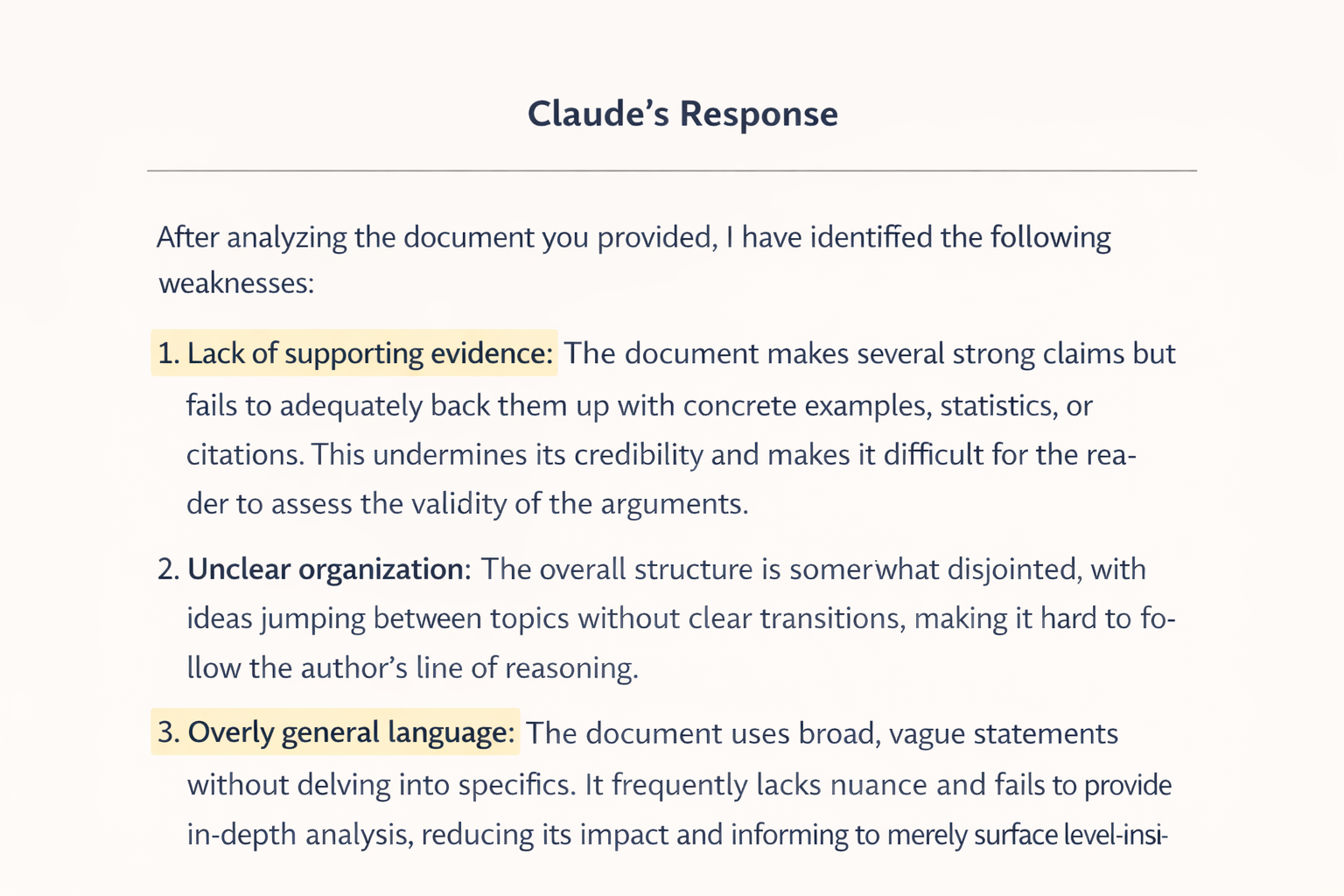

| Prompt 2: I’m going to paste a 2,500-word article. Your job is to (1) identify the 5 weakest paragraphs, (2) explain why each is weak, and (3) suggest a one-sentence fix for each. Do not rewrite the whole article. |

Result: Claude identified the same weaknesses I had noticed myself, plus two I had missed. Its explanations were precise: ‘This paragraph makes a claim in sentence 1 that it never supports,’ rather than vague feedback like ‘this could be clearer.’ This is the kind of editorial feedback that used to require a second human reader.

Mistakes I Made When Using Claude (And How I Fixed Them)

These are real errors from my first two weeks of testing. I’m including them because they represent the learning curve most new users will face:

| My Mistake | What Happened | The Fix |
| --- | --- | --- |
| Vague prompt: ‘Summarize this.’ | Got a generic 3-sentence overview missing key data | Specified: ‘Summarize in 5 bullet points; focus on statistics and dates.’ |
| Uploading a PDF without context | Claude summarized the wrong sections | I added: ‘Focus on Section 3 and the conclusion only.’ |
| Treating it like a search engine | Got confident but inaccurate facts on recent events | Switched to using Claude for reasoning tasks, not live data lookups |
| Asking for code without specifying the language version | Got Python 2 syntax in a Python 3 project | Now always specify: ‘Python 3.11, using pandas 2.0.’ |
| Expecting it to remember previous sessions | Lost all context when I opened a new chat | Started each session by pasting a 3-line project summary |

The pattern across all of these: Claude is only as precise as your instructions. It does not autocorrect vague requests into good ones. Once I understood that, my output quality improved significantly within days.
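One way to make that habit stick is to build prompts from explicit fields instead of typing them freehand. The sketch below is a hypothetical helper of my own (`build_prompt` and its parameter names are assumptions, not a Claude feature). It simply refuses to let a constraint stay implicit.

```python
def build_prompt(task: str, *, audience: str = "", length: str = "",
                 focus: str = "", avoid: tuple[str, ...] = ()) -> str:
    """Assemble a prompt that states each constraint on its own line."""
    lines = [task.strip()]
    if audience:
        lines.append(f"Target audience: {audience}.")
    if length:
        lines.append(f"Length: {length}.")
    if focus:
        lines.append(f"Focus on: {focus}.")
    if avoid:
        lines.append("Avoid these phrases: " + ", ".join(avoid) + ".")
    return "\n".join(lines)


# The vague 'Summarize this.' from the first mistake, made specific.
print(build_prompt(
    "Summarize this.",
    length="5 bullet points",
    focus="statistics and dates",
))
```

The same helper covers the rewrite prompts: pass `audience="small business owners with no technical background"` and `avoid=("leverage", "utilize")` to reproduce the shape of Prompt 1.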

Claude vs ChatGPT-4o: My Head-to-Head Results

I ran the same tasks through both tools during week three of testing. Here’s what I found:

| Task | Claude 3.7 Sonnet | ChatGPT-4o |
| --- | --- | --- |
| Long document summary (5,000 words) | ✅ Excellent, retained full structure | ⚠️ Occasionally truncated key sections |
| Complex paragraph rewrite | ✅ Maintained tone, improved clarity | ✅ Also good, slightly more verbose |
| Python debugging (logic error) | ⚠️ Good reasoning, slower output | ✅ Faster with framework-specific fixes |
| Tone matching (formal to casual) | ✅ Natural, consistent output | ⚠️ Sometimes over-corrected |
| Multi-step research prompt | ✅ Followed all instructions precisely | ⚠️ Occasionally missed sub-instructions |
| Safe/sensitive topic handling | ✅ Careful, transparent responses | ⚠️ Inconsistent across sessions |

My conclusion: Claude is the better tool for structured, long-form work. ChatGPT-4o is faster for coding and quick-turnaround tasks. They serve different workflow needs, and for my use case—writing and research—Claude won on the tasks that mattered most.

Practical Ways I Use Claude AI Daily

- Content Creation

Claude helps:

- Draft article outlines

- Rewrite complex paragraphs

- Improve readability without keyword stuffing

It does not replace human editing, but it accelerates the process.

- Academic and Research Work

Claude excels at:

- Summarizing dense material

- Explaining concepts simply

- Structuring academic drafts

However, citations should always be cross-checked manually.

- Customer Support Drafting

Claude produces customer responses that:

- Sound professional

- Maintain a human tone

- Avoid risky phrasing

This is particularly useful for businesses handling sensitive communication.

- Healthcare and Compliance Analysis

Claude’s safety-focused design makes it suitable for regulated environments, provided it’s used responsibly and within legal guidelines.

Who Should Actually Use Claude on Desktop

Based on 30 days of testing, here’s my honest recommendation:

Claude is an excellent choice for:

- Content creators and writers who work with long-form material

- Researchers who need to analyze and condense lengthy documents

- Professionals drafting formal correspondence, reports, or compliance documents

- Students engaged in structured academic writing, such as essays or dissertations

- Anyone who spends their entire working day in a browser-based environment

Claude is probably not the right tool for:

- Developers who need rapid, framework-specific code generation

- Users who want instant one-line answers without explanation

- Anyone who needs real-time data or current events (Claude’s knowledge has a cutoff)

- Casual users who want an AI for entertainment or light conversation

Final Verdict: Is Claude Worth Using on Your Computer?

After 30 days: yes, with the right expectations.

Claude 3.7 Sonnet is the most dependable AI tool I’ve used for structured, professional desktop work. It follows complex instructions reliably, handles long documents better than anything I’ve tested at this price point, and hallucinates less than the competitors I tried.

It is not the fastest AI tool. It is not the best at coding. And it will not compensate for vague prompts. However, Claude will significantly increase your productivity if you use a computer for serious writing, research, or document production and you’re willing to learn how to prompt it correctly.

The free tier is enough to find out whether it fits your workflow. If you use it for more than an hour a day, the Pro plan is worthwhile.

Bottom line: Claude is not an AI for people who want quick answers. It is an AI for people who want correct, structured, well-reasoned work. If that sounds like you, it is worth your time.

FAQs

Can Claude handle a 10,000-word document without losing context?

In my testing, Claude 3.7 Sonnet handled documents up to around 6,000–7,000 words reliably within a single prompt. Beyond that, I experienced some degradation in instruction-following at the end of long documents. For very large files, I recommend splitting into sections and processing them sequentially.
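For the splitting step, a rough Python sketch is shown here. It assumes the document is plain text with paragraphs separated by blank lines, and the 6,000-word default simply mirrors the limit I observed in my own testing rather than any documented threshold. The function name `split_into_chunks` is hypothetical.

```python
def split_into_chunks(text: str, max_words: int = 6000) -> list[str]:
    """Group paragraphs into chunks of at most max_words words each.

    A single paragraph longer than max_words is kept whole rather than
    cut mid-paragraph, so such a chunk can exceed the limit.
    """
    chunks, current, count = [], [], 0
    for para in text.split("\n\n"):
        para = para.strip()
        if not para:
            continue
        words = len(para.split())
        # Start a new chunk when adding this paragraph would exceed the limit.
        if current and count + words > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += words
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Each chunk can then be pasted in turn, with the same instructions repeated at the top of every message so that none of them depends on the model recalling the end of a very long input.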

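What’s the difference between the free and Pro plans for daily use?

In my experience, the free tier is enough to find out whether Claude fits your workflow. If you use it for more than an hour a day, the Pro plan is worthwhile.

Does Claude remember previous conversations?

No. Claude has no memory between separate conversations. Each new session starts completely fresh. The workaround I use is a short 3–5 line project brief that I paste at the start of every session to re-establish context. It takes 30 seconds and solves the problem.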

What happens when you give Claude a vague prompt?

One of two things: it either asks you a clarifying question before proceeding, or it makes reasonable assumptions and flags them in its response. Both behaviors are actually helpful once you understand them—the tool is signaling that it needs more information rather than guessing wrong silently.

Which Claude model should I use for writing in 2025?

Claude 3.7 Sonnet is the best balance of quality and speed for most writing and research tasks. Claude 3 Opus offers deeper reasoning for highly complex analysis, but it is slower. For quick, lightweight tasks, Claude 3 Haiku is the fastest and most cost-efficient option.