Is Claude Open Source? The Full Answer (What It Actually Means for Your Work)

The open-source question comes up constantly in AI discussions, and for good reason. When developers evaluate a new tool, licensing determines everything: whether they can run it locally, inspect how it works, fine-tune it for specialized tasks, or avoid sending data to an external server. Those are real, practical concerns.

What most articles on this topic get wrong is that they stop at the answer (no, Claude is not open source) and never explain what that means in practice. Is that a dealbreaker? For some use cases, absolutely. For others, the closed nature of Claude is actually an advantage. The honest answer depends entirely on what you are trying to accomplish.

I have spent significant time testing both Claude and several open-source alternatives for the same types of tasks: content creation, document analysis, and research summarization. This article shares those observations alongside the licensing facts so you can make an informed decision rather than a reflexive one.

If you use Claude often, you can explore related guides on Claude models, pricing, and use cases on ClaudeAIWeb for deeper context.

What ‘Open Source’ Actually Means for an AI Model

The term gets used loosely in AI discussions, so it is worth being precise. When an AI model is genuinely open source, three things are typically available to the public: the model weights (the trained parameters that make the model function), the training code (the software used to build and train it), and often the training data or methodology.

With open access to all three, developers can download the model and run it on their own hardware, modify the architecture or training approach, fine-tune the model on custom datasets to specialize it for specific tasks, and distribute their modified version to others.

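Models like Meta’s Llama 3, Mistral, and Falcon come closest to this in practice. Their weights are downloadable, modifiable, and self-hostable. Many of them do not publish their full training data or training code, and some restrict commercial use, so ‘open-weight’ is often a more accurate label than ‘fully open source’. The technical access that matters for self-hosting is there, though.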

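Claude has none of this. Anthropic does not release Claude’s weights, training code, or training data. It does publish research describing parts of its methodology, but nothing that lets you run or modify the model. Every interaction with Claude goes through Anthropic’s infrastructure. That is the complete picture on the licensing side.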

Why Anthropic Keeps Claude Closed And Why That Decision Is Deliberate

Anthropic’s reasoning is not primarily commercial, although commercial interests obviously factor in. The company was founded specifically around AI safety research, and its position is that releasing powerful model weights publicly creates risks they cannot control after the fact.

The core concern is misuse. A closed model allows Anthropic to enforce safety filters, monitor for abuse, update safeguards as new risks emerge, and respond quickly when something goes wrong. Once weights are public, anyone can strip those safety layers, fine-tune the model for harmful purposes, or deploy it in ways the original developers never intended and cannot stop.

This is a genuinely contested position in the AI community. Many researchers argue that open models allow public scrutiny that actually makes AI safer—hidden systems cannot be independently audited. Anthropic disagrees, at least for their flagship models, and has been consistent about that position.

From a practical standpoint for users, this means Claude is a managed service, not a tool you own. Anthropic controls updates, pricing, and access. That creates dependency. Whether that dependency is acceptable depends on your priorities and risk tolerance.

Does It Actually Matter for What You’re Trying to Do?

After spending time testing Claude alongside open-source alternatives, my honest observation is that the open-source question matters a great deal for some users and almost not at all for others. The difference comes down to four specific factors.

Factor 1: Data Privacy Requirements

If your work involves data that cannot leave your organization’s infrastructure, such as medical records, legal documents, or confidential financial information, then Claude’s closed, cloud-based architecture is a hard block. Every prompt you send is processed on Anthropic’s servers. For use cases where that is acceptable, it is not a problem. For use cases where it is not, no amount of contractual protection fully substitutes for data that never leaves your building.

Open-source models running locally solve this completely. The data never moves. This is the single scenario where open source is not a preference but a requirement.

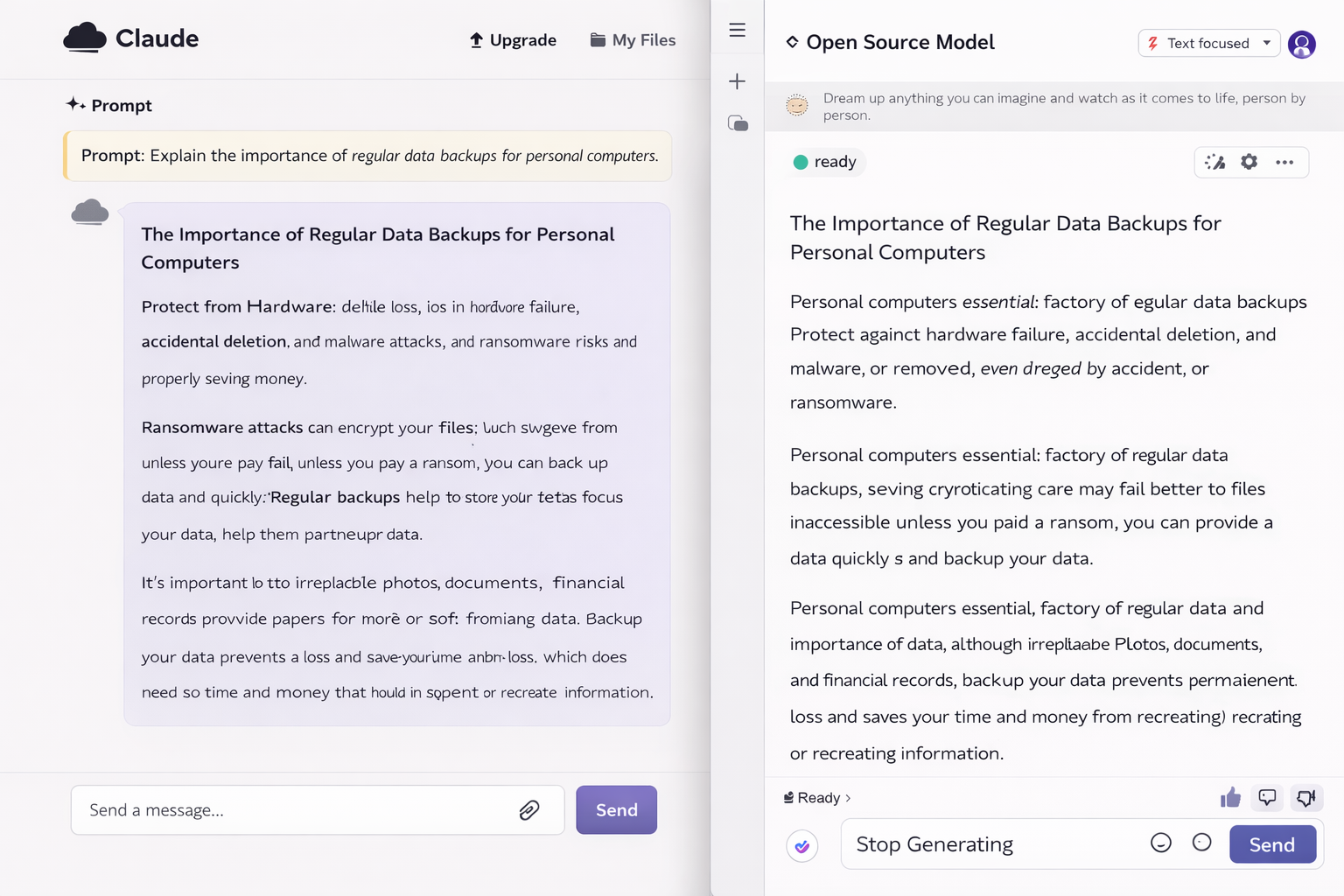

Factor 2: Customization Depth

Claude cannot be fine-tuned on your proprietary data to specialize its knowledge. You can guide its behavior through prompts and system instructions, but the underlying model is fixed. For most content, research, and communication tasks, this is not a limitation in practice. The base model is capable enough.

Where it becomes a limitation: highly specialized domains where generic training is insufficient. A legal firm may need a model trained on its specific case formats. A medical team may require high clinical precision, especially for rare conditions. A retailer may want product recommendations based on its own inventory data. All of these use cases benefit significantly from fine-tuning. This is something Claude simply cannot offer.
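As a rough illustration of the prompt-level customization described above, the sketch below builds (but does not send) a request body in the general shape of Anthropic's Messages API, where a `system` string steers behavior while the model itself stays fixed. The model name is a placeholder, and the helper `build_request` and the law-firm prompt are hypothetical; check Anthropic's current API documentation for valid model identifiers and parameters.

```python
import json

# Placeholder: substitute a model identifier from Anthropic's current docs.
MODEL_NAME = "your-claude-model-id"


def build_request(system_prompt: str, user_text: str, max_tokens: int = 1024) -> dict:
    """Return a Messages-API-style request body (nothing is sent over the network)."""
    return {
        "model": MODEL_NAME,
        "max_tokens": max_tokens,
        # The system prompt shapes tone and format; it does not retrain the model.
        "system": system_prompt,
        "messages": [{"role": "user", "content": user_text}],
    }


if __name__ == "__main__":
    body = build_request(
        system_prompt="You draft case summaries in our house format: Facts, Issue, Holding.",
        user_text="Summarize the attached ruling.",
    )
    print(json.dumps(body, indent=2))
```

Everything the law firm in this example can change lives in `system_prompt` and the messages. Teaching the model new case law or a proprietary taxonomy would require fine-tuning, which is the limitation discussed above.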

Factor 3: Long-Term Cost Structure

Open-source models appear cheaper because the weights are free. The actual cost picture is more complicated. Running a capable open-source model requires meaningful GPU hardware, ongoing infrastructure management, and engineering time to maintain, update, and troubleshoot the stack. For a solo user or small team without dedicated ML engineering capacity, the ‘free’ model often costs more in total than a Claude subscription.

For larger organizations with existing infrastructure and engineering teams, the calculation flips. At scale, self-hosted models become cheaper. The break-even point depends heavily on your usage volume and internal costs.
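To make the break-even idea concrete, here is a minimal sketch under a deliberately simple assumption: API spend grows linearly with volume, while self-hosting has a fixed monthly cost plus a smaller per-token cost. The function name and every figure below are invented for illustration and are not real prices.

```python
from typing import Optional


def break_even_mtok(api_price_per_mtok: float,
                    selfhost_fixed_monthly: float,
                    selfhost_marginal_per_mtok: float) -> Optional[float]:
    """Monthly volume (millions of tokens) at which self-hosting matches API spend.

    Returns None when self-hosting never catches up, i.e. its per-token
    cost is not lower than the API's.
    """
    saving_per_mtok = api_price_per_mtok - selfhost_marginal_per_mtok
    if saving_per_mtok <= 0:
        return None
    return selfhost_fixed_monthly / saving_per_mtok


if __name__ == "__main__":
    # All figures are hypothetical placeholders, not quoted prices.
    volume = break_even_mtok(
        api_price_per_mtok=10.0,         # blended $ per million tokens
        selfhost_fixed_monthly=6000.0,   # GPUs plus engineering time, $ per month
        selfhost_marginal_per_mtok=2.0,  # power and ops, $ per million tokens
    )
    print(f"Break-even at about {volume:.0f} million tokens per month")
```

With these made-up inputs the break-even lands at 750 million tokens per month. Below that volume the API is cheaper, above it self-hosting wins. Plug in your own numbers, and remember that engineering time is usually the largest and most underestimated part of the fixed cost.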

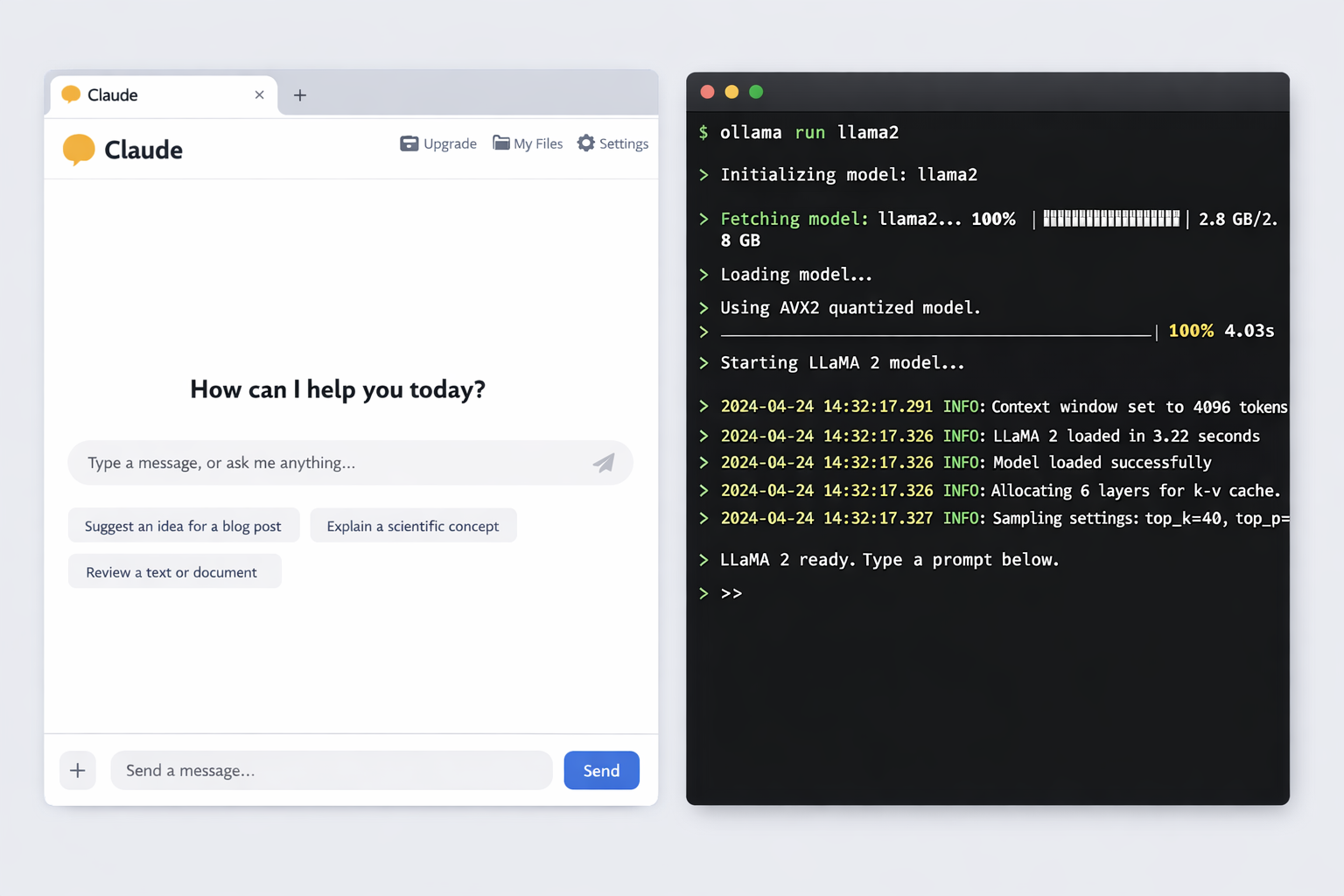

Factor 4: Setup Time and Operational Overhead

I tested this directly. Getting a usable Claude workflow took about 15 minutes. Create an account, write a system prompt, and start working. Getting Llama 3 running locally took the better part of a day, including troubleshooting driver issues, configuring the inference server, and testing output quality across different settings.

That gap narrows as your technical experience grows, and managed open-source hosting services reduce it further. But for most non-technical users and small teams, Claude’s operational simplicity is a genuine advantage, not just a marketing point.

Why the “Is Claude Open Source” Question Matters

This question is more than a matter of curiosity. It influences budgets, workflows, and technical decisions. Open-source models give developers deep control: they allow local hosting and full customization. Closed models remove operational complexity and shift part of the compliance and security burden to the vendor.

When users search ‘is Claude open source’, they usually want flexibility and freedom from vendor dependency. Enterprises, however, often prefer managed solutions, and Claude appeals strongly to that need. Understanding this difference helps teams choose correctly.

Claude vs Open Source: Full Feature Comparison

| Factor | Claude (Closed Source) | Open Source (Llama 3, Mistral) |
| --- | --- | --- |
| Access method | Web interface or API only; no download | Free download; run locally or on your own server |
| Customization | None: Anthropic controls all model behavior | Full: fine-tune on your own data, modify architecture |
| Setup time | Minutes: create an account and start using | Days to weeks: requires hardware, config, dependencies |
| Ongoing cost | Subscription or per-token API pricing | Free model weights; you pay for compute and engineering |
| Who manages updates | Anthropic: automatic, no action needed | You must track releases and manage upgrades yourself |
| Data privacy | Data processed on Anthropic’s servers | Complete control: data never leaves your infrastructure |
| Compliance burden | Anthropic handles GDPR/CCPA alignment | Your team manages all compliance requirements |
| Best for | Teams that need reliability without infrastructure | Developers who need control, privacy, or customization |

Open Source Alternatives to Claude: What Each Is Actually Good For

If you have decided that open source is the right path for your use case, here is an honest breakdown of the main options and how they compare to Claude’s capabilities. These observations are based on hands-on testing for writing and research tasks — the same tasks I regularly use Claude for.

| Model | Best Use Case | Hardware Needed | Setup Difficulty | vs Claude |
| --- | --- | --- | --- | --- |
| Llama 3.1 (Meta) | General reasoning, chat, research | 16GB+ VRAM GPU | Medium | More flexible; less polished out of box |
| Mistral 7B | Coding, fast tasks, low-resource use | 8GB VRAM GPU | Medium | Faster on weak hardware; less context depth |
| Mixtral 8x7B | Complex reasoning, longer context | 48GB+ VRAM (2× GPU) | High | Matches Claude on some tasks; high setup cost |
| Falcon 180B | Enterprise research, large-scale analysis | Multi-GPU cluster | Very High | Powerful but impractical for solo users |
| Gemma 2 (Google) | Lightweight tasks, experimentation | 8GB VRAM GPU | Low–Medium | Easy entry point; limited capability depth |

My overall observation after testing these: the gap between open-source models and Claude has narrowed significantly in 2024 and 2025. Llama 3.1 in particular handles general writing and reasoning tasks at a quality level that would have required a paid API model two years ago. The remaining gaps are in instruction-following precision, context handling on very long documents, and consistency across varied task types. Claude still leads on all three.
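The hardware column in the table above follows loosely from a simple rule of thumb: the memory needed for the weights alone is roughly the parameter count times the bytes per parameter. The sketch below applies that arithmetic. It is an approximation that ignores the KV cache, activations, and framework overhead, and the function name is hypothetical.

```python
def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and framework overhead, so real
    requirements are higher than this figure.
    """
    total_bytes = num_params * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    seven_billion = 7_000_000_000
    for bits in (16, 8, 4):
        gb = weight_memory_gb(seven_billion, bits)
        print(f"7B parameters at {bits}-bit: ~{gb:.1f} GB")
```

A 7B-parameter model at 16-bit precision needs about 14 GB for weights alone, which is why running one on an 8 GB GPU generally implies a quantized (8-bit or 4-bit) build, with some loss of output quality as the trade-off.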

Benefits of Open-Source AI Models

Open-source AI models offer powerful advantages. Developers can inspect the full codebase. They can verify training approaches and safety mechanisms.

Teams can fine-tune models for niche domains. They avoid recurring API costs long-term. Popular open-source benefits include:

- Full customization control

- Transparent architecture

- On-premise deployment options

- Reduced vendor lock-in

Models like Llama 3 and Mistral dominate this space. However, they demand strong technical expertise.

My opinion:

Open-source works best when experimentation matters more than speed. However, freedom comes with responsibility.

Which Should You Choose? A Practical Decision Guide

Rather than a generic recommendation, here is a direct decision framework based on the factors covered above:

| Choose Claude when… | Choose Open Source when… |
| --- | --- |
| You need results immediately, not after a week of setup | You need full control over model behavior and outputs |
| Your team has no ML engineers or DevOps capacity | Your data cannot leave your own infrastructure under any circumstances |
| You work in a regulated industry (finance, legal, healthcare) | You are fine-tuning for a highly specific niche domain |
| Consistency and uptime matter more than customization | You have in-house ML engineers who can manage the stack |
| You process sensitive client data through a vetted vendor | Long-term cost savings justify the upfront engineering investment |
| You want automatic model improvements without any action | You are doing academic research requiring full transparency |

If you fall clearly on one side, the decision is straightforward. If you fall into both columns — for example, you need data privacy but also want immediate usability — consider a hybrid approach: use Claude for tasks that do not involve sensitive data and a locally hosted model for anything confidential.
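The hybrid approach described above can be sketched as a small routing function that decides, per request, whether text goes to a hosted model or stays on a local one. Everything here is hypothetical: the pattern list, the `Route` type, and the backend labels are illustrative stand-ins, and keyword matching is a weak safeguard compared with an explicit classification policy.

```python
import re
from dataclasses import dataclass

# Hypothetical markers; a real deployment would define its own policy.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),            # US SSN-like number
    re.compile(r"\bconfidential\b", re.IGNORECASE),
    re.compile(r"\bpatient\b", re.IGNORECASE),
]


@dataclass(frozen=True)
class Route:
    backend: str  # "local" or "hosted"
    reason: str


def choose_backend(text: str, force_local: bool = False) -> Route:
    """Pick a backend; anything that looks sensitive stays on the local model."""
    if force_local:
        return Route("local", "caller marked the request as confidential")
    for pattern in SENSITIVE_PATTERNS:
        if pattern.search(text):
            return Route("local", f"matched pattern {pattern.pattern!r}")
    return Route("hosted", "no sensitive markers found")


if __name__ == "__main__":
    for sample in ("Draft a blog intro about gardening",
                   "Summarize this patient intake form"):
        print(sample, "->", choose_backend(sample))
```

The `force_local` flag is the safer path in practice: let the person or system that knows the data's classification decide, and treat pattern matching only as a backstop that fails toward the local model.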

What Anthropic Has Actually Made Public

While Claude itself is closed source, Anthropic is not completely opaque. The company publishes research papers covering its Constitutional AI approach, which describes the training methodology behind Claude’s safety behavior. These papers are publicly available and have been widely studied by the AI research community.

Anthropic also releases safety evaluations, model cards for Claude releases, and policy documents explaining how Claude is designed to handle sensitive topics. None of this gives developers the ability to run or modify Claude, but it does provide more transparency than pure black-box vendors.

For developers who want to work with Anthropic’s approach in an open context, the Constitutional AI research is worth reading. It explains why Claude behaves differently from other models on certain types of requests, and understanding that design intent helps users work with Claude more effectively.

Is Claude Open Source Worth Debating?

So, is Claude open source? No, and that choice remains intentional. Claude prioritizes safety, compliance, and reliability. Open source prioritizes control and transparency.

Neither approach fits everyone. Each serves a distinct audience. Claude works best for professionals needing dependable results. Open source suits builders wanting full ownership. Understanding this difference prevents frustration. For more detailed Claude guides, explore ClaudeAIWeb.

Final Verdict: Should Claude’s Closed Status Change Your Decision?

For the majority of people asking this question, the answer is no. Most users searching ‘Is Claude open source’ are not planning to self-host a model or fine-tune weights. They want to know whether Claude is free, whether they can use it without restrictions, or whether there is a better alternative. The closed-source status is a technical detail that rarely changes the practical calculus for everyday use.

For developers and organizations with genuine requirements around data sovereignty, deep customization, or cost at scale, Claude’s closed architecture is a real constraint worth working around. The open-source ecosystem in 2025 is capable enough to handle most tasks that Claude handles. The trade-off is engineering overhead and setup complexity.

The most honest summary: Claude’s value is not its architecture. It is the quality and consistency of what it produces, the reliability of its API, and the absence of operational burden. If those things matter for your workflow, the open-source question is largely irrelevant. If they do not, there are strong open alternatives worth serious consideration.

Bottom line: Claude is closed source by design, and Anthropic has been deliberate and consistent about that choice. For most users, it does not matter. For users with hard data privacy requirements or deep customization needs, open-source models are a legitimate and increasingly capable alternative, but they come with real engineering costs that closed tools do not.

FAQs

Is any part of Claude open source?

The Claude model itself (its weights, architecture details, and training data) is not open source. However, Anthropic does publish research papers on the techniques behind Claude, including its Constitutional AI methodology. Anthropic also publishes open-source client SDKs for its API, and Claude can be accessed via tools and integrations built by third parties. But the core model remains proprietary.

Could Anthropic ever release Claude as open source in the future?

It is possible but unlikely based on Anthropic’s stated position. The company’s safety-focused philosophy is directly tied to maintaining control over how Claude is deployed and used. Releasing weights publicly would remove that control. Anthropic has shown no movement toward open-sourcing Claude, and their public statements suggest this remains a firm design choice rather than a temporary business decision.

Can I run Claude locally on my own hardware?

No. Claude cannot be downloaded or run locally. All Claude usage routes through Anthropic’s servers via the Claude.ai interface or the Anthropic API. If local deployment is a hard requirement for your use case, open-source models like Llama 3 or Mistral—which can be run locally through tools like Ollama or LM Studio—are the practical alternatives.
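For a sense of what the local route looks like in code, here is a minimal sketch that talks to an Ollama server over its local HTTP API using only the Python standard library. It assumes Ollama is installed and running on its default port and that a model tagged `llama3` has already been pulled; the helper names are hypothetical.

```python
import json
import urllib.request

# Ollama's default local endpoint; adjust if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_ollama_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the request; requires a running Ollama server with the model pulled."""
    with urllib.request.urlopen(build_ollama_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `generate("Summarize this contract clause: ...")` sends the prompt to `localhost` only, so the text never leaves the machine. That is the property that matters for the data-privacy cases discussed earlier.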

Does using the Claude API mean my data is shared or stored by Anthropic?

Anthropic’s data usage policies vary by product tier. On the API, Anthropic states that it does not use API inputs and outputs to train Claude by default. Enterprise agreements include additional data handling commitments. For the free Claude.ai tier, different terms apply. If data handling is a concern, reading Anthropic’s current privacy policy and API terms directly — rather than relying on summaries — is the right approach, as these policies can change.

What is the closest open-source alternative to Claude in terms of output quality?

As of mid-2025, Llama 3.1 70B is the closest open-source model to Claude in terms of general reasoning and writing quality. It handles most content and research tasks at a comparable level when properly prompted. The gaps that remain are most noticeable in very long document handling, nuanced instruction-following across complex multi-step tasks, and consistency across varied task types within a single session. For coding-heavy workflows, Mistral and Code Llama remain strong alternatives.